Senior Research Scientist - Huawei Noah's Ark Lab

01/2023 — Present

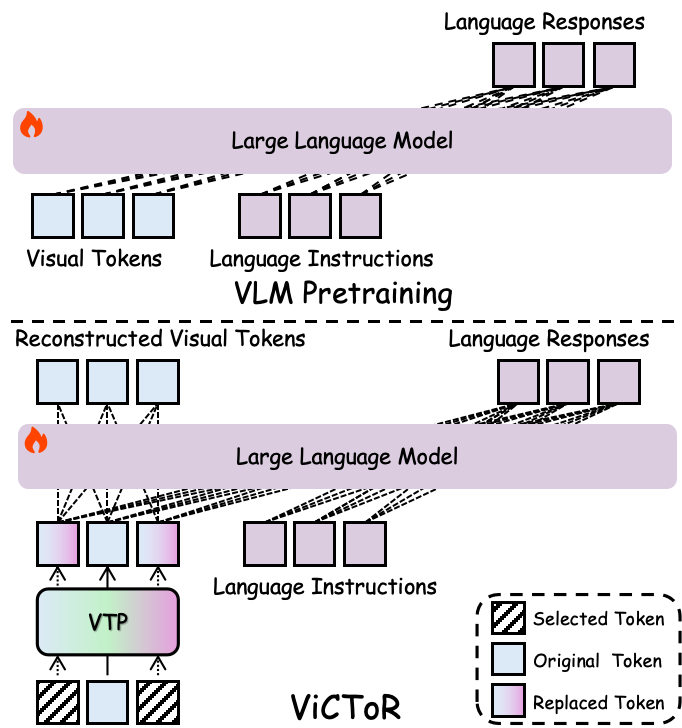

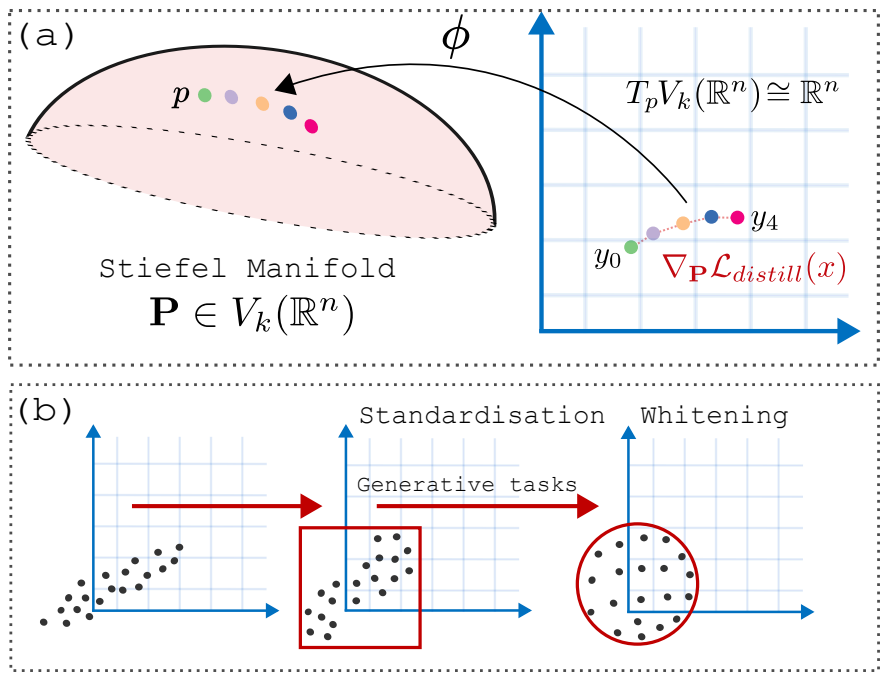

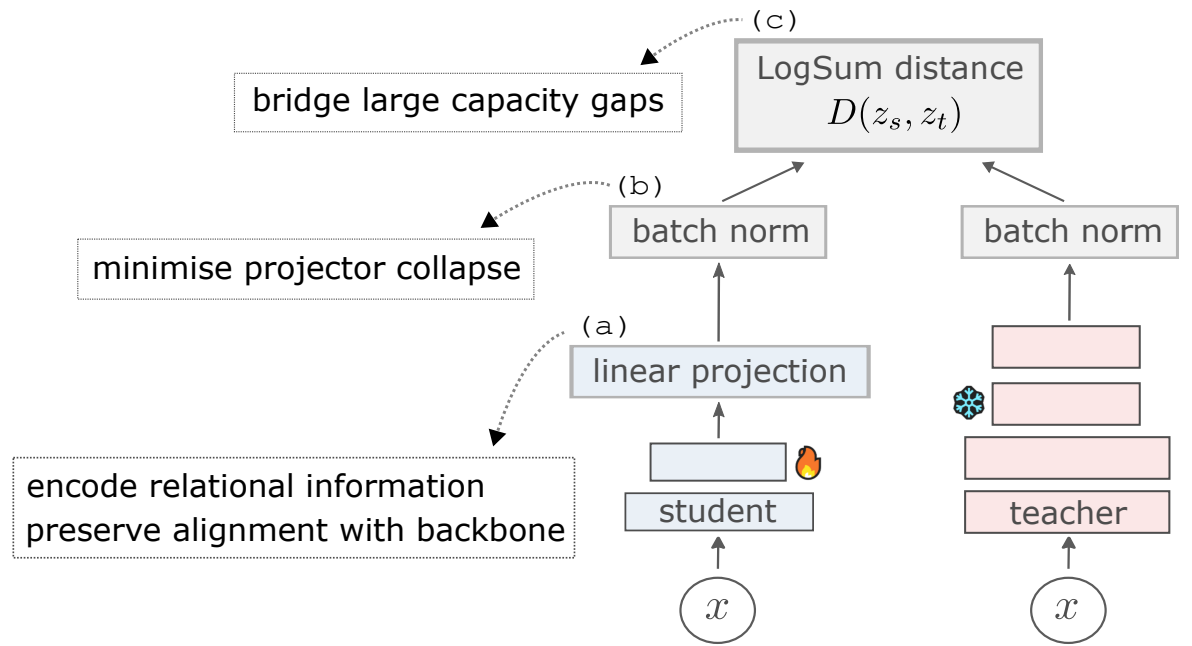

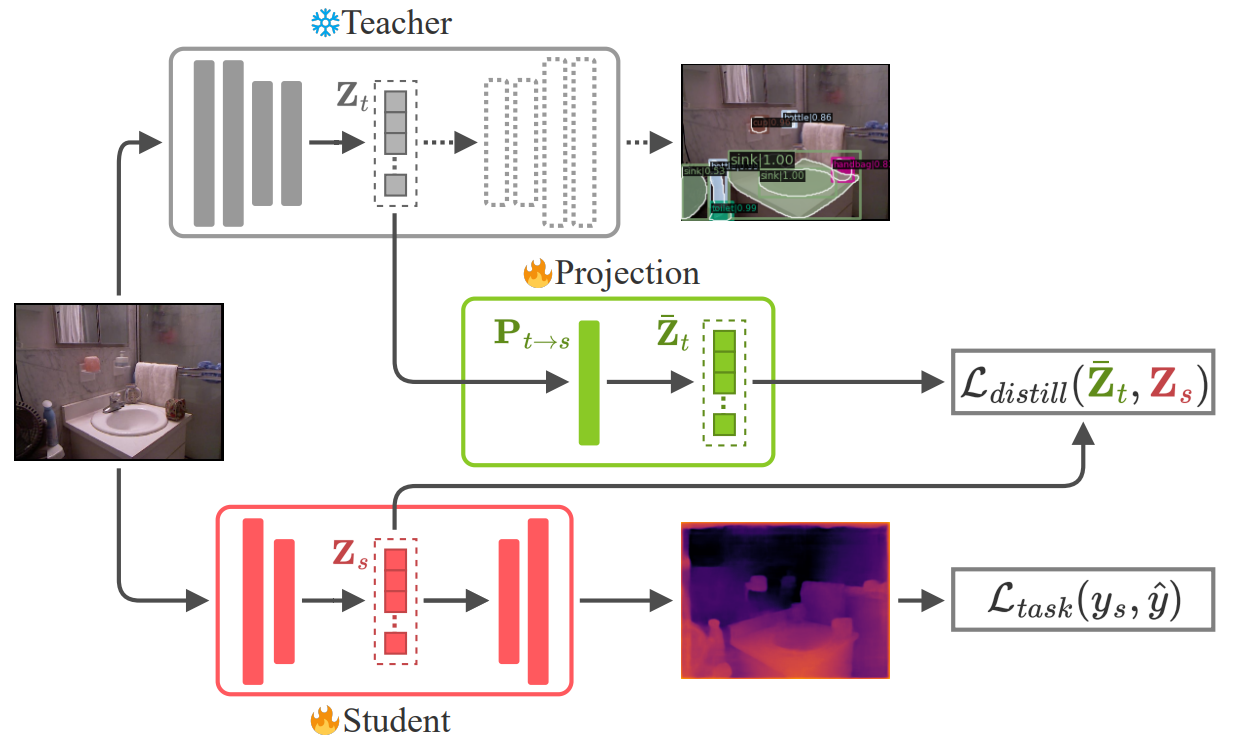

- Foundational research on knowledge distillation for vision-language models.

- Involved in the recruitment and interview stages for several permanent and internship positions.

- Collaborated on and led several successful research projects.

- Received the award for outstanding individual contributor in 2024.

- Managed several interns working on multi-modal learning topics.

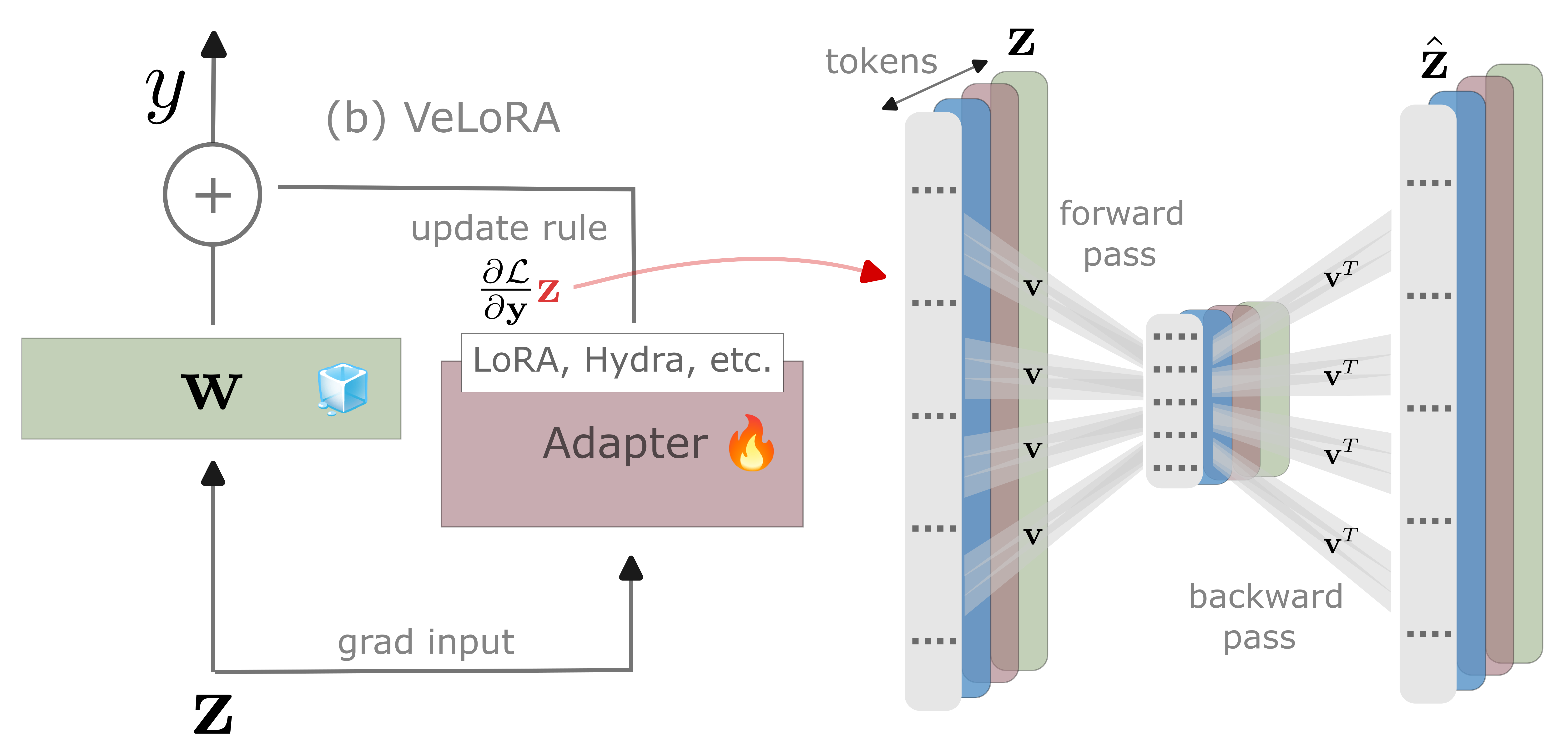

- Integrated and landed research on parameter-efficient fine-tuning with the product camera teams.

Research Scientist Intern - Samsung Research UK

06/2022 — 01/2023

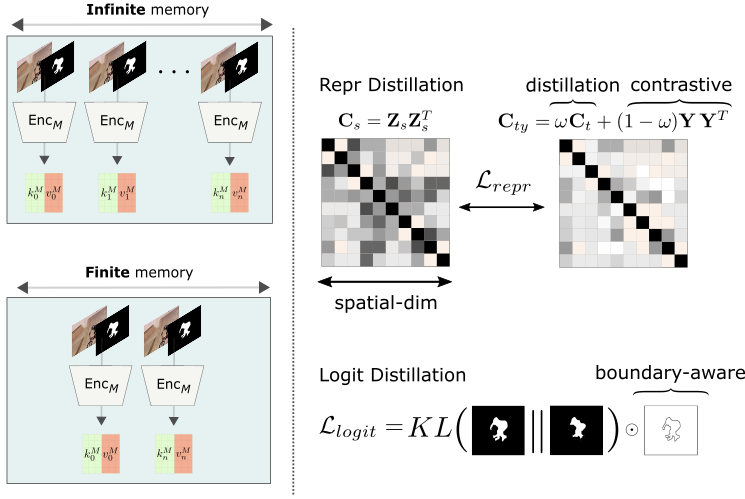

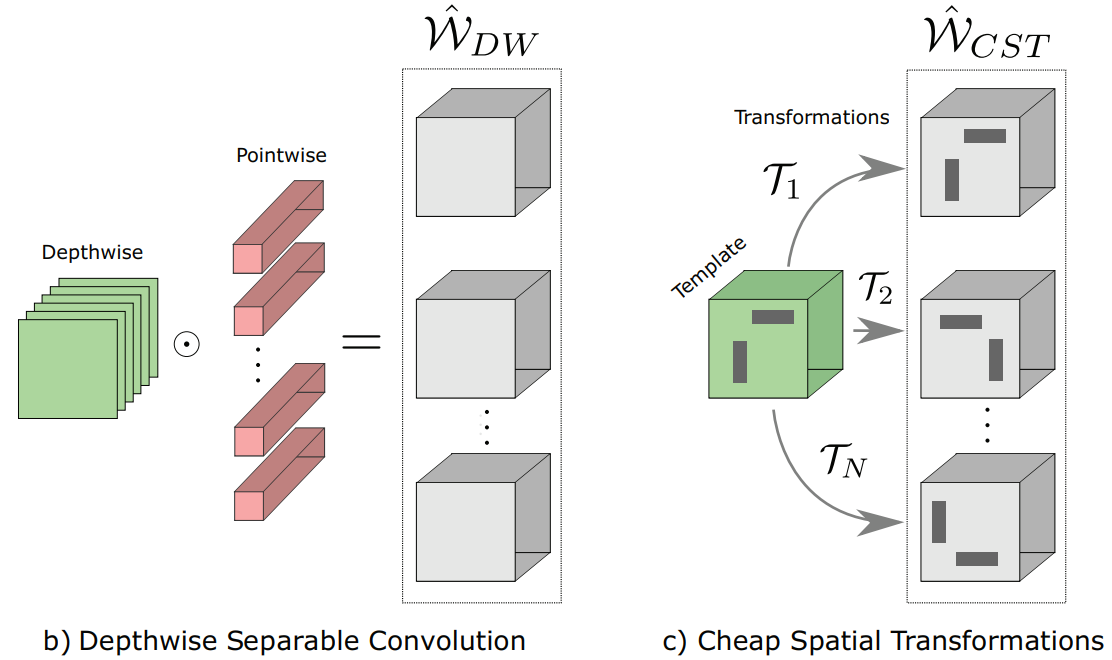

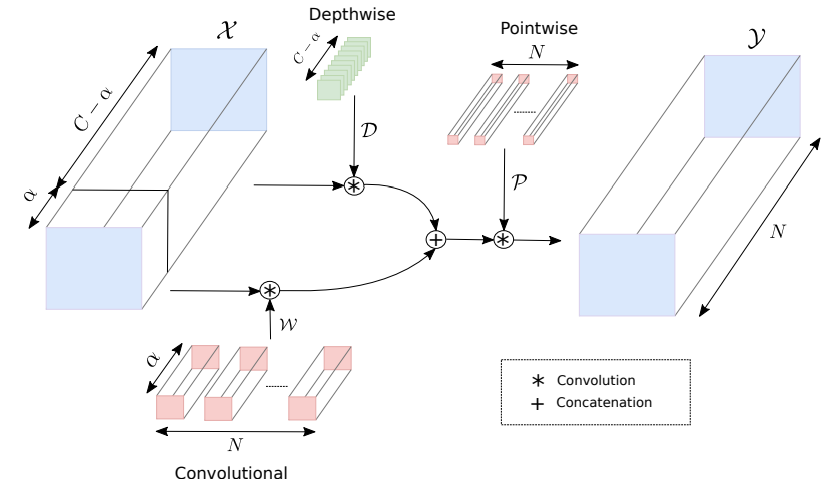

- Semi-supervised video object segmentation for mobile devices.

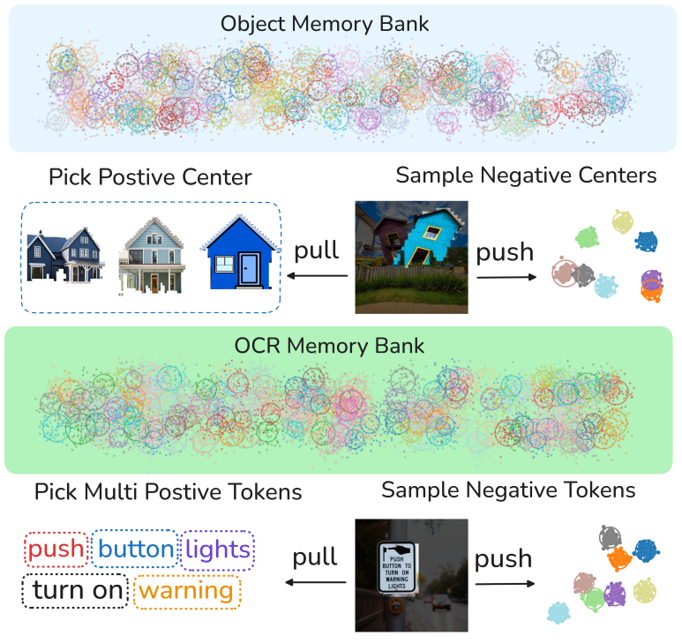

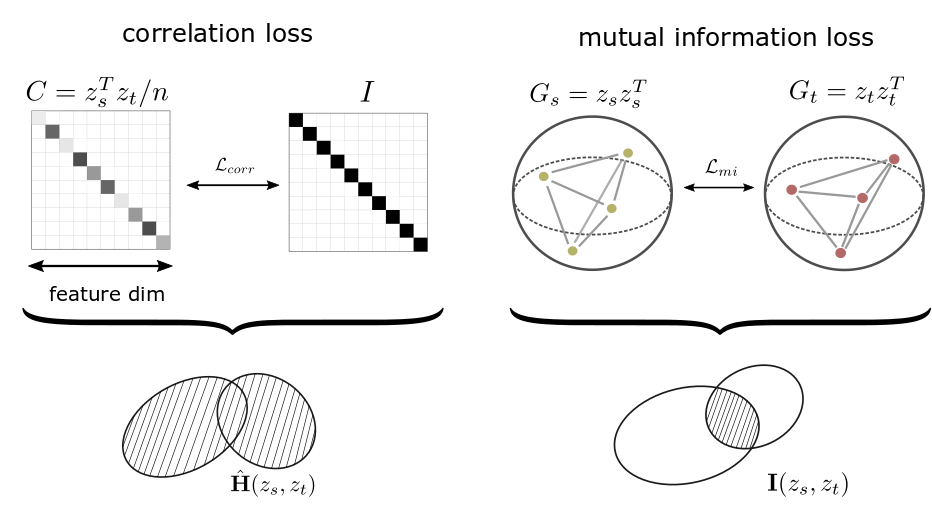

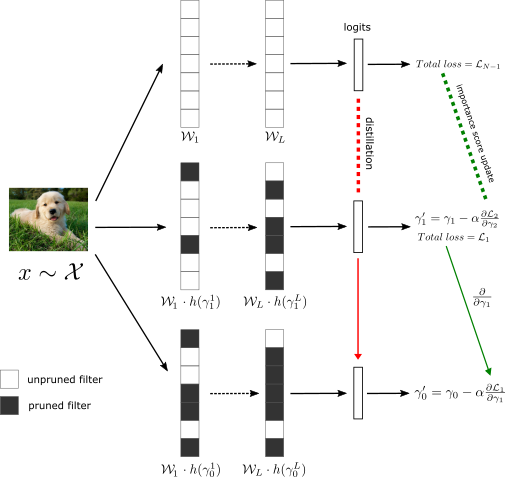

- Novel unification of representation distillation and contrastive learning.

- Achieved competitive performance while running up to 5× faster with 32× fewer parameters.

- Integrated research code into Samsung's mobile product.

- SRUK 2023 Best Paper Award for MobileVOS presented at CVPR. [blog]

Software Engineer Intern - Waymont Consulting

06/2017 — 09/2017

- Developed a responsive web GUI with a C++ backend to automate signal processing tests.

- Replaced a previously manual testing system, increasing efficiency a hundredfold.

Software Engineer Intern - Toshiba Research Europe

06/2016 — 09/2016

- Designed out-of-tree blocks in C++ using the GNURadio signal processing environment.

- Characterised and linearised a communication channel between two USRP devices.

- Utilised the RC-5 infrared standard for short-range, low-data-rate IoT applications.

Software Engineer Intern - Toshiba Research Europe

06/2015 — 09/2015

- Developed a GUI to operate a motor-driven positioner in conjunction with a VNA.

- Measured various antenna patterns within an anechoic chamber.

- Wrote VB.NET code to synchronise two X-Y positioners for analysing propagation channels.